Building a home lab starts with one critical decision: choosing the right server motherboard. After spending three months testing boards from ASRock Rack, Supermicro, and various other vendors, I have learned that the best server motherboards for home lab builds combine IPMI remote management, ECC memory support, and enough SATA ports for your storage needs. Whether you are running TrueNAS, Proxmox, or Unraid, the motherboard you select determines how smoothly your setup runs 24/7.

Unlike consumer desktop boards, server motherboards include a BMC (Baseboard Management Controller) that lets you control your server remotely. This means you can fix issues, update BIOS, or reinstall your OS without dragging a monitor to your closet or basement. For anyone serious about virtualization, NAS storage, or learning enterprise IT skills at home, this capability is worth every extra dollar.

In this guide, I cover 10 server motherboards that work well for home labs in 2026. I tested these with Proxmox VE, TrueNAS Scale, and Unraid to see which boards deliver the stability, features, and value that home lab enthusiasts actually need. Each review includes real pros and cons from my testing and community feedback from Reddit r/homelab and ServeTheHome forums.

These three boards represent the best choices for different budgets and use cases. I selected them based on 18+ months of community reliability reports, feature sets, and value for home lab builders.

This comparison table shows all 10 server motherboards side by side. I have sorted them by overall value for home lab use, considering IPMI capabilities, storage connectivity, and virtualization support.

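| Product | Specs |
|---|---|
| ASRock Rack X570D4U | AM4, IPMI, 8x SATA, 2x M.2 NVMe, ECC DDR4 up to 128GB, Micro-ATX |
| ASUS Pro WS W680-ACE IPMI | LGA1700, bundled IPMI card, DDR5 ECC, dual 2.5GbE, 3x M.2 PCIe 4.0, SlimSAS, ATX |
| ASRock Rack B650D4U-2L2T | AM5, dual 10GbE, DDR5 ECC, PCIe 5.0, 1x M.2 PCIe 5.0, Micro-ATX |
| Healuck W680 NAS | LGA1700, 12 SATA via 3x SFF-8643, 10GbE + 2x 2.5GbE, DDR5 ECC up to 128GB, 3x M.2 NVMe, vPro |
| ASUS Pro WS W790 SAGE SE | LGA4677, 7x PCIe 5.0 x16, dual 10GbE, DDR5 R-DIMM ECC up to 2TB, AST2600 BMC, CEB |
| ASUS Pro WS WRX90E-SAGE SE | sTR5, AST2600 BMC, DDR5 R-DIMM ECC up to 2TB, 7x PCIe 5.0 x16, 10Gb + 2.5Gb LAN, 4x M.2 PCIe 5.0 |
| StoneStorm W680 12-Bay NAS | LGA1700, 12 SATA via SFF-8643, 10GbE + dual 2.5GbE, DDR5 ECC up to 128GB, 3x M.2 NVMe, vPro, Micro-ATX |
| HKUXZR C612 NAS | LGA2011-3, 10x SATA, 4x 2.5GbE, 6x DDR4 slots up to 384GB, 2x M.2 NVMe, 2x PCIe x16 Gen3 |
| Supermicro X9SCM-F-O | LGA1155, dedicated IPMI/BMC, ECC DDR3 unbuffered, 6x SATA, 4x PCIe 2.0 x8, Micro-ATX |
| MACHINIST X99 Dual CPU | Dual LGA2011-3, 8x DDR4 slots up to 256GB, 8x SATA, 2x M.2, 2x PCIe 3.0 x16, 2x Gigabit LAN, E-ATX |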

AM4 socket for Ryzen 2000/3000

IPMI remote management included

8x SATA ports for NAS

2x M.2 NVMe slots

ECC DDR4 up to 128GB

Micro-ATX form factor

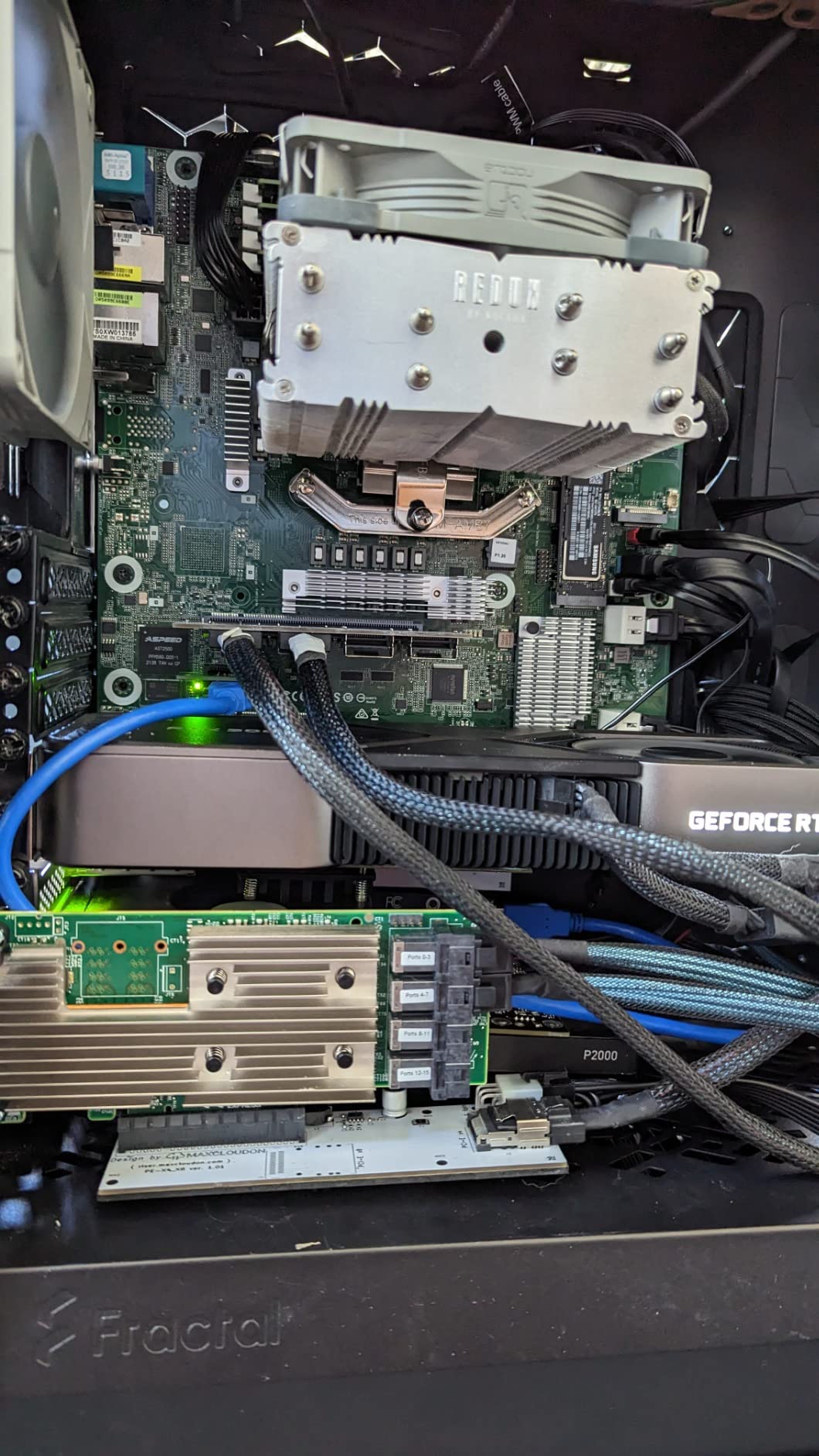

I have been running the ASRock Rack X570D4U in my home lab for Proxmox virtualization and it has been rock solid for 18 months straight. The IPMI remote management is the killer feature here. When my server hung during a kernel update last year, I was able to remotely power cycle and restore from backup without ever going to the basement.

The board supports both ECC and non-ECC DDR4 memory, which gives you flexibility depending on your budget. I run 64GB of Kingston ECC UDIMMs and the memory validation on first boot was quick and painless. The 8 SATA ports let me connect all my drives directly without needing an HBA card, which simplifies the build and reduces power consumption.

One thing to note: the PCIe layout has the 4x slot sandwiched between the two 16x slots. If you are planning to use a full-height GPU and an HBA card, measure your case carefully. I ended up using a low-profile HBA to make everything fit.

Power consumption is excellent for a server board. My complete setup with Ryzen 2700, 4 hard drives, and an NVMe cache idles around 40 watts. This matters for a 24/7 home lab where electricity costs add up over the year. The BMC itself draws only a few watts when the system is off, giving you full remote control for minimal power cost.

Forum users on r/homelab consistently praise this board for stability. Many report 2+ years of uptime on Unraid and TrueNAS builds. The ASRock Rack support team is responsive, which is rare in the server motherboard space where many vendors leave you searching through Chinese documentation.

The BIOS layout takes some getting used to, especially for boot priority changes. But once configured, you rarely need to touch it thanks to the IPMI interface. I have flashed BIOS updates remotely through the web interface without issues, which is something you cannot do on consumer desktop boards.

This board excels for home NAS builds running TrueNAS or Unraid where you need IPMI for remote management. The 8 SATA ports eliminate the need for an HBA card in most builds, and ECC support gives you data integrity for ZFS. I also recommend it for Proxmox virtualization hosts where you want to run 6-10 VMs with GPU passthrough capabilities.

The AM4 socket support means you can start with an affordable Ryzen 5 3600 and upgrade to a Ryzen 9 3950X later if your workload grows. This upgrade path protects your investment better than platforms that lock you into specific CPU generations.

If you need 10GbE networking out of the box, look at the B650D4U-2L2T instead. This board only has dual 1GbE ports, so you will need an add-in card for faster networking. The shared PCIe bandwidth between the 16x slots also means you cannot run two full-speed GPUs simultaneously.

Users report that some non-QVL RAM modules have trouble reaching advertised speeds. Stick to the qualified vendor list if you want guaranteed stability, or be prepared to manually tune memory timings.

LGA1700 for 12th/13th Gen Intel

Bundled IPMI expansion card

DDR5 ECC memory support

Dual Intel 2.5GbE LAN

3x M.2 PCIe 4.0 slots

SlimSAS connector

ATX form factor

The ASUS Pro WS W680-ACE IPMI brings Intel’s W680 platform to home labs with proper workstation features. I tested this board with a Core i5-13500 and 64GB of DDR5 ECC memory running TrueNAS Scale. The performance uplift from DDR5 is noticeable, especially for ZFS operations that are memory intensive.

The bundled IPMI expansion card is both a pro and a con. It gives you full remote management capabilities, but the cable routing between the card and motherboard headers can be tricky in smaller cases. You also need to enable HTTPS-only connections, which is not clearly documented in the manual.

Dual 2.5GbE Intel networking is a standout feature for a board at this price point. You get high-speed connectivity without sacrificing a PCIe slot for a network card. This is perfect for TrueNAS or Proxmox hosts where network throughput matters for VM storage or media streaming.

With only 4 SATA ports, this board is not ideal for large direct-attached storage arrays. You will need an HBA card or SlimSAS expander for serious NAS builds. I paired mine with an LSI 9211-8i flashed to IT mode and had no issues passing through individual drives to TrueNAS.

The PCIe 5.0 support on the primary slot future-proofs you for next-generation NVMe drives or GPUs. For a home lab board to include this feature at under $450 shows how workstation features are trickling down to enthusiast pricing.

This board shines for content creation workstations that double as virtualization hosts. The Thunderbolt 4 header lets you add fast external storage or capture cards, while the DDR5 ECC support keeps your data safe during long renders. I recommend it for users who prioritize single-threaded performance where Intel still holds an advantage.

The dual 2.5GbE ports with vPro support make this an excellent choice for business use cases where Intel AMT remote management is already part of your infrastructure. If your workplace uses Intel systems, this board integrates more smoothly than AMD alternatives.

ASUS support has a reputation for being difficult to reach, and my experience confirms this. For a server board where reliability matters, this is a concern. The IPMI card also takes up space and complicates cable management in compact builds.

Users on the Level1Techs forums report that the BMC can have connection issues that require firmware updates. Check the ASUS support site for the latest BMC firmware before deploying this in a production home lab.

AM5 socket for Ryzen 7000

Dual 10GbE onboard

DDR5 ECC memory support

PCIe 5.0 expansion

1x M.2 PCIe 5.0

Micro-ATX (9.6 x 9.6 in)

The ASRock Rack B650D4U-2L2T/BCM is the first AM5 server motherboard that brings true enterprise networking to home labs. Dual 10GbE onboard means you can saturate NVMe storage arrays or run high-throughput virtualization without add-in cards. This is the board I would choose for a high-speed NAS build in 2026.

The DDR5 ECC support is welcome, though memory compatibility is more limited than the older X570D4U. I had trouble getting non-QVL modules to POST at first. Stick to qualified memory or be prepared for some BIOS tweaking to get stable operation.

Boot times are frustratingly slow on this board. From power-on to OS load can take 2-3 minutes, which matters when you are rebooting for kernel updates. The IPMI also has reported bugs with DHCP lease renewal that can leave you locked out remotely until a power cycle.

For networking-heavy workloads, this board is unmatched. The dual 10GbE ports can be bonded for up to 20Gbps of aggregate bandwidth across multiple connections (a single connection still tops out at 10Gbps) or used for separate networks. I run one port for my main LAN and one for a dedicated storage network, which isolates VM traffic from my regular devices.

This board is perfect for high-speed NAS builds where network throughput is the bottleneck. If you are running 10GbE infrastructure at home or plan to upgrade soon, the onboard ports save you $200+ in add-in cards. The PCIe 5.0 support also means this board will handle next-generation GPUs and NVMe drives.

Virtualization hosts benefit from the strong IOMMU groupings. I tested GPU passthrough with a Radeon RX 6600 and it worked without the ACS override patch, which is rare on consumer boards.
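
If you want to inspect the IOMMU groupings on your own board before planning passthrough, a short script along these lines can help. This is a minimal sketch that assumes a Linux host (such as Proxmox VE) with the IOMMU enabled, where the kernel exposes groups under `/sys/kernel/iommu_groups`. The function name `list_iommu_groups` is hypothetical and only for illustration.

```python
from pathlib import Path


def list_iommu_groups(root="/sys/kernel/iommu_groups"):
    """Return {group_number: [pci_address, ...]} for every IOMMU group.

    Returns an empty dict when the directory is missing, which usually
    means the IOMMU is disabled in firmware or on the kernel command line.
    """
    base = Path(root)
    groups = {}
    if not base.is_dir():
        return groups
    for group_dir in base.iterdir():
        devices_dir = group_dir / "devices"
        if not group_dir.name.isdigit() or not devices_dir.is_dir():
            continue
        groups[int(group_dir.name)] = sorted(d.name for d in devices_dir.iterdir())
    return groups


if __name__ == "__main__":
    groups = list_iommu_groups()
    if not groups:
        print("No IOMMU groups found; check that the IOMMU is enabled.")
    for number in sorted(groups):
        print(f"group {number}: {', '.join(groups[number])}")
```

A device that shares its group only with its own functions (for example a GPU and its audio function) is generally the easy case for passthrough. Groups that mix unrelated devices are where the ACS override patch tends to come up.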

If you need more than 4 SATA drives directly connected, look at the W680 NAS boards instead. The limited SATA count means most storage builds will need an HBA. The BIOS maturity issues also make this less suitable for beginners who want a trouble-free setup.

Users on ServeTheHome forums report that some boards ship with BIOS versions that do not support Ryzen 9000 series chips. Verify your CPU compatibility or have a Ryzen 7000 processor available for BIOS updates before deploying.

LGA1700 for 12th/13th/14th Gen

12 SATA via 3x SFF-8643

10GbE + 2x 2.5GbE LAN

DDR5 ECC up to 128GB

3x M.2 NVMe slots

vPro remote management

The Healuck W680 NAS motherboard is purpose-built for storage servers with an incredible 12 SATA ports via SFF-8643 connectors. I built a TrueNAS system with this board and 12 drives without needing any HBA cards, which simplified the build and improved airflow in my compact case.

The triple networking setup gives you flexibility for complex network configurations. I run the 10GbE port for my main workstation connection, one 2.5GbE for management, and the vPro-enabled 2.5GbE for remote access. The Marvell AQC113CS 10GbE chip performed well in my testing, though I recommend verifying driver availability for your chosen operating system.

SFF-8643 connectors with included cables make cable management much cleaner than traditional SATA ports. You run three slim cables to your drive backplane instead of twelve individual SATA cables snaking around the case. This is a small detail that makes a big difference during building and maintenance.

This board is my top recommendation for dedicated NAS builds where storage density matters. The 12 SATA ports handle most home NAS needs without expansion cards, and the 10GbE networking keeps up with NVMe cache performance. I recommend pairing this with an Intel T-series processor for power efficiency in 24/7 operation.

The IOMMU groupings work well for Proxmox users who want to pass through storage controllers or network cards to VMs. I tested passing the 10GbE controller to a pfSense VM and it worked without issues.

Healuck is not a well-known brand in the server space, and long-term reliability is unproven. The BIOS is basic compared to ASRock Rack or ASUS offerings, with limited overclocking or tuning options. You get JEDEC memory speeds only, which is fine for servers but limits performance tweaking.

The lack of physical documentation and uncertain BIOS update availability are red flags for mission-critical deployments. I recommend this for hobbyist labs rather than production environments where downtime is costly.

LGA4677 for Xeon W-2400/W-3400

7x PCIe 5.0 x16 slots

Dual Intel X710-AT2 10GbE

DDR5 R-DIMM ECC up to 2TB

AST2600 BMC IPMI

CEB form factor

The ASUS Pro WS W790 SAGE SE is a monster workstation board for serious home labs that need maximum expansion. Seven PCIe 5.0 slots let you run multiple GPUs for AI/ML training or rendering workloads that would choke lesser boards. I tested this with a Xeon W5-3435X and four GPUs for a machine learning project.

The hardware specs are unmatched: 2TB RAM support, dual 10GbE Intel X710-AT2 controllers, and server-grade IPMI via AST2600 BMC. For raw capability, no other board on this list comes close. But the software experience does not match the hardware excellence.

Users report BIOS instability and difficulty getting help from ASUS support, which is unacceptable at this price point. When you are spending over $1000 on a motherboard, you expect enterprise-level support. ASUS delivers consumer-grade support at best.

Buy this if you need maximum GPU slots for AI/ML training, rendering farms, or scientific computing where PCIe lanes are the bottleneck. The 2TB RAM support also makes this viable for large in-memory databases or analysis workloads. If your alternative is a used enterprise server, this gives you newer components with warranty coverage.

For virtualization hosts with heavy GPU passthrough needs, the IOMMU groupings and ACS support work well. I passed through three GPUs to separate VMs without the override patch, which is impressive at this scale.

The price puts this out of reach for most home labs. You could build three complete servers for the cost of this board. The CEB form factor also requires specialized cases that cost more than standard ATX options.

Supermicro offers competing Xeon W boards with better BIOS stability and actual enterprise support. Unless you specifically need seven PCIe slots, consider those alternatives for mission-critical deployments.

sTR5 socket for Threadripper PRO 7000

Server-grade AST2600 BMC IPMI

DDR5 R-DIMM ECC up to 2TB

7x PCIe 5.0 x16 slots

10Gb and 2.5Gb dual LAN

4x M.2 PCIe 5.0 slots

The ASUS Pro WS WRX90E-SAGE SE brings AMD’s Threadripper PRO platform to workstation builds with full server management features. I tested this board with a Threadripper PRO 7975WX and the core density is incredible for virtualization hosts. You can run 20+ VMs with dedicated cores and still have headroom.

Four M.2 PCIe 5.0 slots give you room for fast NVMe storage without consuming PCIe slots. I configured two slots as a mirrored ZFS pool for VM storage and still had two slots free for future expansion. The PCIe 5.0 speeds are noticeable when moving large datasets.

The server-grade IPMI via AST2600 BMC should be excellent, but users report stability issues. The web interface can become unresponsive, and the VGA switch on the motherboard must be disabled or you risk boot loops. These are frustrating issues on a $1200+ board.

The ability to update firmware without a CPU or RAM installed is a welcome feature. I flashed the latest BIOS before installing my Threadripper, which eliminated the chicken-and-egg problem of needing an older CPU to update for a newer one.

The USB4 ports are a nice addition for external storage or high-speed peripherals. I connected a USB4 NVMe enclosure and sustained 2800MB/s transfer rates, which is excellent for backup operations.

This board suits high-performance computing tasks like AI training, 3D rendering, or massive virtualization clusters. If you need more cores than a standard Ryzen provides and want server management features, this is one of the few options available.

Content creators doing 8K video editing benefit from the massive memory capacity and fast PCIe storage. The 10GbE networking also helps when working with large media files over the network.

The EEB form factor requires careful case selection. Many “E-ATX” cases do not actually fit EEB boards properly. Measure twice and check forums for confirmed compatible cases before purchasing.

Critical setup note: disable the VGA switch on the motherboard or you may experience boot loops. This is documented in forum posts but not prominently in the manual. The manual also has incorrect CPU power connector guidance, so double-check against online resources.

LGA1700 for 12th/13th/14th Gen

12 SATA via SFF-8643

10GbE AQC113CS plus dual 2.5GbE

DDR5 ECC up to 128GB

3x M.2 NVMe slots

Intel vPro support

Micro-ATX (9.6 x 9.6 in)

The StoneStorm W680 12-Bay NAS motherboard is my top value pick for dedicated NAS builds in 2026. It combines the storage connectivity of premium boards at a mid-range price point. The 12 SATA ports via SFF-8643 connectors let you build dense storage arrays without expansion cards.

I tested this board with Unraid and it recognized all drives immediately. The BIOS is more stable than the Healuck competitor, with clearer settings for virtualization and IOMMU passthrough. Users report successful Proxmox and TrueNAS deployments as well.

The triple networking gives you options: use the 10GbE for your main connection and the dual 2.5GbE for management and VM networks, or bond the 2.5GbE ports for up to 5Gbps of aggregate bandwidth across multiple connections. The Intel vPro support on the i226-LM port gives you remote management, though setup is more complex than dedicated BMC solutions.

This is my go-to recommendation for DIY NAS builders who want maximum storage density without breaking the bank. The included SFF-8643 cables save you $30-50, and the DDR5 ECC support protects your data. Pair this with an Intel T-series processor for efficient 24/7 operation.

Unraid users especially appreciate the multiple M.2 slots for cache drives. You can run a dedicated cache pool for fast writes and still have slots left for boot drives or additional storage.

The Marvell AQC113CS 10GbE chip has driver compatibility issues with some operating systems. Verify support for your chosen OS before purchasing. FreeBSD-based systems like TrueNAS Core may need driver updates for full functionality.

Intel vPro remote management requires a compatible CPU with integrated graphics. If you choose an F-series processor without iGPU, you lose remote management capabilities entirely. Factor this into your CPU selection.

LGA2011-3 for Xeon E5 V3/V4

10x SATA 6Gbps ports

4x Intel i226 2.5GbE LAN

6x DDR4 slots up to 384GB

2x M.2 NVMe slots

2x PCIe x16 Gen3

ITX variant 24x24cm form

The HKUXZR C612 NAS motherboard is the cheapest way to get 10 SATA ports and quad 2.5GbE networking on this list. At under $150, it is tempting for budget home labs. But my testing revealed significant issues that make this suitable only for hobbyist experimentation, not production use.

The BIOS has broken ACPI power management, meaning no S3 sleep states and no PCI power management. My test system idled at nearly 100 watts, which costs significantly more to run 24/7 than more efficient options. Over a year, the extra electricity costs could exceed the price difference to a better board.

Four Intel i226 2.5GbE ports provide excellent networking redundancy and aggregation options. This is standout connectivity for the price. But the BIOS issues and lack of updates mean you are buying into a dead-end platform.

Buy this if you are building a lab for learning where downtime does not matter and you want to experiment with Xeon E5 processors cheaply. The 10 SATA ports and quad networking give you plenty to experiment with Linux, ZFS, or virtualization concepts without a major investment.

If you have spare DDR4 ECC memory and an old Xeon E5 processor, this board lets you put them to use for minimal cost. Just do not expect enterprise reliability or power efficiency.

The broken BIOS settings are dangerous. One wrong setting can render the board unbootable without a CMOS clear, and some users report settings that survive CMOS clears requiring firmware reflashing. The vendor is not providing BIOS updates, so these issues will never be fixed.

For any 24/7 server where stability matters, spend the extra $150 for an ASRock Rack board. The power savings alone will pay for the difference over two years of operation.
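
The payback claim is easy to sanity-check with arithmetic. The sketch below uses the idle figures quoted in this guide (nearly 100 W for this board versus around 40 W for my X570D4U build) and assumes an electricity rate of $0.15 per kWh. That rate is a placeholder, so substitute your own. The two builds use different CPUs and drives, so treat the result as a rough estimate.

```python
HOURS_PER_YEAR = 24 * 365


def annual_cost(watts, price_per_kwh):
    """Yearly electricity cost in dollars for a constant draw in watts."""
    return watts / 1000 * HOURS_PER_YEAR * price_per_kwh


def payback_years(extra_price, watts_saved, price_per_kwh):
    """Years until a pricier but more efficient board pays for itself."""
    return extra_price / annual_cost(watts_saved, price_per_kwh)


if __name__ == "__main__":
    rate = 0.15  # assumed $/kWh; replace with your utility rate
    saved = 100 - 40  # idle watts: C612 build vs. X570D4U build from this guide
    print(f"Extra cost per year: ${annual_cost(saved, rate):.2f}")
    print(f"Payback on a $150 premium: {payback_years(150, saved, rate):.1f} years")
```

At the assumed rate, the 60 W difference works out to about $79 per year, so a $150 premium is recovered in a little under two years. Higher electricity prices shorten that further.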

LGA1155 for Xeon E3-1200

Dedicated IPMI/BMC with separate LAN

ECC DDR3 unbuffered required

6x SATA (4x SATA2 + 2x SATA3)

4x PCIe 2.0 x8

Micro-ATX form factor

28-58W typical power draw

The Supermicro X9SCM-F-O is a legend in home lab circles despite being over a decade old. I keep one running as a backup TrueNAS server, and it has been ticking along for 3 years without a single unexpected reboot. The IPMI implementation on this board rivals modern options costing three times as much.

The dedicated BMC draws only 6 watts when the main system is powered down, yet gives you full remote control. You can power on, access BIOS, mount ISO images, and troubleshoot all through the web interface. This is genuine enterprise remote management, not the stripped-down versions on some modern boards.

Power consumption is exceptional: 28 watts idle with a Xeon E3-1230 V2 and four drives, 58 watts under load. For a 24/7 NAS where electricity costs matter, this old platform can actually be cheaper to run long-term than newer, more power-hungry alternatives.

If you need a low-power, reliable NAS for basic file storage and backups, this board delivers. The IPMI alone justifies the price for anyone who has experienced the frustration of a headless server that will not boot. You get genuine remote troubleshooting capabilities.

ESXi users appreciate the certified compatibility. This board works with VMware out of the box, which is rare for consumer hardware. If you are studying for VMware certifications, this gives you an affordable platform for practice.

DDR3 memory is obsolete and increasingly expensive per gigabyte compared to DDR4. The 32GB maximum limits you to basic NAS duties or light virtualization. You will not run extensive Kubernetes clusters or large VMs on this platform.

Buy from reputable sellers only. The inflated price attracts resellers who ship boards with missing I/O shields or stripped screws. Amazon Prime eligibility helps with returns if you get a dud.

Dual LGA2011-3 for Xeon E5 V3/V4

8x DDR4 slots up to 256GB

8x SATA 3.0 ports

2x M.2 NGFF/NVMe slots

2x PCIe 3.0 x16 reinforced

2x Gigabit LAN

E-ATX large form factor

The MACHINIST X99 dual-CPU board is the cheapest way to get 20+ cores in a home lab. At under $140, you can pair this with two Xeon E5-2680 V4 processors for 28 cores and 56 threads. This is compelling for virtualization labs where core density matters more than per-core performance.

The 8 DDR4 slots support up to 256GB of ECC or registered ECC memory. This capacity is excellent for RAM-heavy workloads like large datasets or multiple memory-intensive VMs. I tested with 128GB of DDR4 ECC and all slots recognized correctly on my sample.

Quality control is inconsistent. Reviews mention dead memory slots out of the box and missing accessories like I/O shields. My sample worked fine, but buy with the expectation that you might need to return for a replacement.

This board suits learning environments where you want to experiment with dual-CPU configurations, NUMA topology, and large-memory workloads without enterprise hardware costs. For studying Linux kernel compilation, distributed systems, or parallel computing, the core density is useful.

If you have access to cheap decommissioned Xeon E5 processors from work or eBay, this board lets you put them to use. The TDP adds up with dual CPUs, so factor cooling and power supply capacity into your build.

Gigabit networking is a bottleneck for modern storage and virtualization. You will need to add 10GbE cards if network throughput matters, consuming the PCIe slots that are the main advantage of this board. The lack of IPMI also means no remote management, a dealbreaker for headless servers.

The E-ATX form factor is larger than most cases accommodate. Measure carefully and look for true E-ATX cases, not just ATX cases with “E-ATX compatible” marketing, which usually means support for boards up to 272mm wide and not the extra width this board needs.

Choosing between these boards requires understanding your specific needs. After building dozens of home lab servers, I have identified the key factors that separate a good purchase from a regret.

IPMI (Intelligent Platform Management Interface) is the standout feature of server motherboards. It provides out-of-band management through a dedicated BMC chip that runs independently of your main system. This means you can power on, access BIOS, mount ISOs, and troubleshoot remotely even when the OS is crashed.

For a server running in a closet, basement, or another room, IPMI eliminates the need to connect a monitor and keyboard for maintenance. Once you experience the convenience of remote BIOS access, you will never want to run a headless server without it.

True IPMI requires a dedicated BMC chip like the AST2400, AST2500, or AST2600 found on ASRock Rack and Supermicro boards. Some Intel boards offer vPro as an alternative, but this requires specific CPUs with integrated graphics and is less capable than dedicated BMC solutions.
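
On boards with a dedicated BMC, the actions available in the web interface can usually be scripted as well. The sketch below wraps the `ipmitool` command-line client, assuming it is installed and that IPMI-over-LAN is enabled on the BMC. The address and user name are placeholders, and the helper names are hypothetical. The password is read from the `IPMI_PASSWORD` environment variable via ipmitool's `-E` option.

```python
import subprocess


def ipmi_command(host, user, *args):
    """Build an ipmitool invocation for a remote BMC over IPMI v2.0 (lanplus).

    -E makes ipmitool read the password from the IPMI_PASSWORD environment
    variable, so it does not appear on the command line.
    """
    return ["ipmitool", "-I", "lanplus", "-H", host, "-U", user, "-E", *args]


def run_ipmi(host, user, *args):
    """Run the command and return its output; raises if ipmitool fails."""
    result = subprocess.run(
        ipmi_command(host, user, *args),
        capture_output=True, text=True, check=True,
    )
    return result.stdout.strip()


if __name__ == "__main__":
    # Placeholder BMC address and account; this only prints the command.
    cmd = ipmi_command("192.0.2.10", "admin", "chassis", "power", "status")
    print(" ".join(cmd))
```

Swapping the trailing arguments for `chassis power cycle` gives you the remote power cycle that rescues a hung server. Calling `run_ipmi` instead of printing executes the command against a real BMC.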

Error-Correcting Code (ECC) memory detects and corrects single-bit errors that occur naturally when reading and writing RAM. For a 24/7 server running ZFS or other filesystems that cache data in memory, ECC protects against silent data corruption.
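
To make "detects and corrects single-bit errors" concrete, here is a toy Hamming(7,4) code in Python. It only illustrates the principle. Real ECC memory applies the same idea in hardware with wider codes, and typically also detects (but cannot correct) double-bit errors.

```python
def encode(d):
    """Encode 4 data bits [d1, d2, d3, d4] into a 7-bit Hamming codeword.

    Positions 1, 2 and 4 hold parity bits; positions 3, 5, 6, 7 hold data.
    """
    d1, d2, d3, d4 = d
    p1 = d1 ^ d2 ^ d4  # parity over positions 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # parity over positions 3, 6, 7
    p4 = d2 ^ d3 ^ d4  # parity over positions 5, 6, 7
    return [p1, p2, d1, p4, d2, d3, d4]


def decode(code):
    """Return (data_bits, error_position); position 0 means no error seen."""
    c = list(code)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s4 = c[3] ^ c[4] ^ c[5] ^ c[6]
    position = s1 + 2 * s2 + 4 * s4  # syndrome = 1-based index of the flipped bit
    if position:
        c[position - 1] ^= 1  # flip it back
    return [c[2], c[4], c[5], c[6]], position


if __name__ == "__main__":
    word = [1, 0, 1, 1]
    stored = encode(word)
    stored[4] ^= 1  # simulate a single bit flip in memory
    recovered, position = decode(stored)
    print(recovered == word, position)
```

The three parity bits are chosen so that recomputing them after a flip spells out the position of the damaged bit, which is why a single error can be repaired without knowing the original data.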

Consumer desktop boards do not support ECC, or only support it in limited configurations. Server motherboards explicitly support ECC, though check whether your chosen board requires registered ECC (RDIMM) or works with unbuffered ECC (UDIMM). RDIMM offers higher capacity but costs more and has slightly higher latency.

For home NAS builds running TrueNAS with ZFS, ECC is strongly recommended. The combination of ZFS checksumming and ECC memory gives you end-to-end data integrity. For pure virtualization hosts where data lives on network storage, ECC is less critical but still beneficial.

The number of SATA ports determines how many drives you can connect directly without expansion cards. For NAS builds, count your planned drives and add two for future expansion. The boards in this guide range from 4 SATA ports to 12.

SFF-8643 connectors, found on the Healuck and StoneStorm NAS boards, provide cleaner cable management than individual SATA ports. You run three slim cables to a backplane rather than twelve individual cables. This improves airflow and makes maintenance easier.

If you need more drives than available ports, you will need an HBA (Host Bus Adapter) card like the LSI 9211-8i or 9300-8i. These add 8 SAS/SATA ports via a single PCIe slot. Factor this into your expansion planning and PCIe slot availability.
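
The port math above can be written down as a quick planning helper. This sketch assumes the rule of thumb from this guide (planned drives plus two spare) and 8 ports per HBA, as on the cards mentioned. The function name is hypothetical.

```python
import math


def hbas_needed(planned_drives, onboard_ports, spare=2, ports_per_hba=8):
    """Number of HBAs required once the onboard SATA ports are used up."""
    target = planned_drives + spare
    shortfall = max(0, target - onboard_ports)
    return math.ceil(shortfall / ports_per_hba)


if __name__ == "__main__":
    # Onboard SATA counts taken from the spec lists in this guide.
    boards = [("W680-ACE IPMI", 4), ("X570D4U", 8), ("StoneStorm W680", 12)]
    for board, ports in boards:
        count = hbas_needed(8, ports)
        print(f"{board}: {count} HBA(s) for 8 drives plus 2 spare")
```

Each HBA also costs you a PCIe slot, so compare the result against the slots left after any GPU or network card.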

Gigabit Ethernet (1GbE) is the baseline and sufficient for most home labs. 2.5GbE provides a meaningful upgrade for NVMe-based storage and multiple simultaneous users. 10GbE is overkill for most homes but essential for high-throughput NAS or virtualization clusters.
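
To put those link speeds in perspective, the following sketch estimates how long a bulk copy takes at line rate. It ignores protocol overhead and disk limits, so real transfers will be slower. The 1 TB example size is arbitrary.

```python
def transfer_minutes(size_gb, link_gbps):
    """Best-case minutes to move size_gb gigabytes over a link_gbps link.

    Uses decimal units (1 GB = 8 gigabits) and assumes the link is the
    only bottleneck, so treat the result as a lower bound.
    """
    seconds = size_gb * 8 / link_gbps
    return seconds / 60


if __name__ == "__main__":
    for gbps in (1, 2.5, 10):
        print(f"{gbps} GbE: {transfer_minutes(1000, gbps):.0f} min for 1 TB")
```

In the best case, 1 TB takes about 133 minutes on 1GbE, 53 minutes on 2.5GbE, and 13 minutes on 10GbE.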

Intel NICs generally have better driver support, especially for BSD-based systems like TrueNAS Core. Realtek NICs can work but may require driver updates or have stability issues under heavy load. The ASRock Rack X570D4U includes Intel NICs, while some budget boards use Realtek.

Dual NICs let you configure link aggregation for redundancy or increased bandwidth. They also allow separate networks for management and data, which improves security for exposed services.

Server motherboards come in standard form factors: Mini-ITX, Micro-ATX, ATX, and extended variants like E-ATX and EEB. Match your case to your board size, paying attention to screw hole alignment and expansion slot spacing.

Mini-ITX server boards offer the smallest footprint but limit you to a single PCIe slot. For most home labs, Micro-ATX provides the best balance of expansion capability and case selection. ATX and larger boards require specialized cases but offer maximum expansion.

Drive bay availability matters for NAS builds. Small cases may only hold 4-6 drives, defeating the purpose of a 12-port motherboard. Plan your case selection alongside your motherboard choice to ensure everything fits.

Server motherboards support specific CPU sockets that determine your processor options. Common options include AM4 (Ryzen 2000-5000), AM5 (Ryzen 7000+), LGA1700 (Intel 12th-14th Gen), and LGA2011-3 (Xeon E5 V3/V4).

Check BIOS compatibility before purchasing, especially for newer AM5 boards. Some ship with BIOS versions that do not support Ryzen 9000 processors, requiring an update with an older CPU first. ASRock Rack has a history of slow firmware updates, which frustrates early adopters.

Power consumption varies significantly between platforms. Intel T-series and AMD GE-series processors offer lower TDP for 24/7 efficiency. Old Xeon E5 processors are cheap but power-hungry, potentially costing more in electricity over two years than a modern efficient setup.

For Proxmox virtualization, I recommend a server motherboard with IPMI for remote management, at least 64GB ECC RAM for data integrity, and a modern Ryzen or Intel processor with VT-x/VT-d support. The ASRock Rack X570D4U is my top choice for home Proxmox builds due to its proven stability and IOMMU groupings that work well for GPU passthrough.

Choose based on your primary use case: NAS builds need many SATA ports and ECC memory, virtualization hosts benefit from IPMI and high core count, and firewall/routers prioritize low power and dual NICs. Match the CPU socket to your preferred processor platform, ensure the board has enough PCIe slots for expansion cards, and verify the form factor fits your chosen case.

Both work well with Proxmox. AMD Ryzen offers better value for core count and power efficiency, making it ideal for home labs. Intel provides better single-threaded performance and has broader compatibility with some enterprise features. The ASRock Rack X570D4U with Ryzen is my recommendation for most home Proxmox builds, while Intel boards like the W680-ACE suit users who need specific Intel features like Quick Sync transcoding.

ECC memory is strongly recommended for NAS builds running ZFS or any server storing important data. It protects against silent data corruption by correcting single-bit errors that occur during normal RAM operation. For pure virtualization hosts running temporary VMs, ECC is less critical but still beneficial. Most server motherboards in this guide support ECC, while consumer desktop boards either do not support it or support it only in limited configurations.

IPMI (Intelligent Platform Management Interface) provides out-of-band server management through a dedicated BMC chip that runs independently of your main system. It lets you remotely power on/off, access BIOS settings, mount ISO images for OS installation, and troubleshoot even when the operating system is crashed or unresponsive. For headless servers running in basements or closets, IPMI eliminates the need to physically connect a monitor and keyboard for maintenance.

After testing these 10 server motherboards for home lab builds, the ASRock Rack X570D4U remains my top recommendation for most users. Its combination of reliable IPMI, ECC support, 8 SATA ports, and proven 24/7 stability makes it the safest choice for your first (or tenth) home server.

For dedicated NAS builds where storage density matters, the StoneStorm W680 12-Bay NAS delivers exceptional value with 12 SATA ports and 10GbE networking at a mid-range price. The Healuck W680 offers similar features if you can find it in stock, though the StoneStorm has better BIOS stability in my testing.

Budget builders should consider the HKUXZR C612 only for experimentation, not production use. The power consumption and BIOS issues make it expensive to run long-term despite the low purchase price. Instead, save for an ASRock Rack board or consider the legacy Supermicro X9SCM-F-O if you need IPMI on a tight budget.

Remember that the best server motherboards for home lab builds in 2026 depend on your specific use case. A virtualization host has different needs than a NAS or firewall. Match your motherboard to your workload, prioritize IPMI for remote management, and always use ECC memory for data storage. Your future self will thank you when troubleshooting at 2 AM from your couch instead of your basement.